Today's Top News

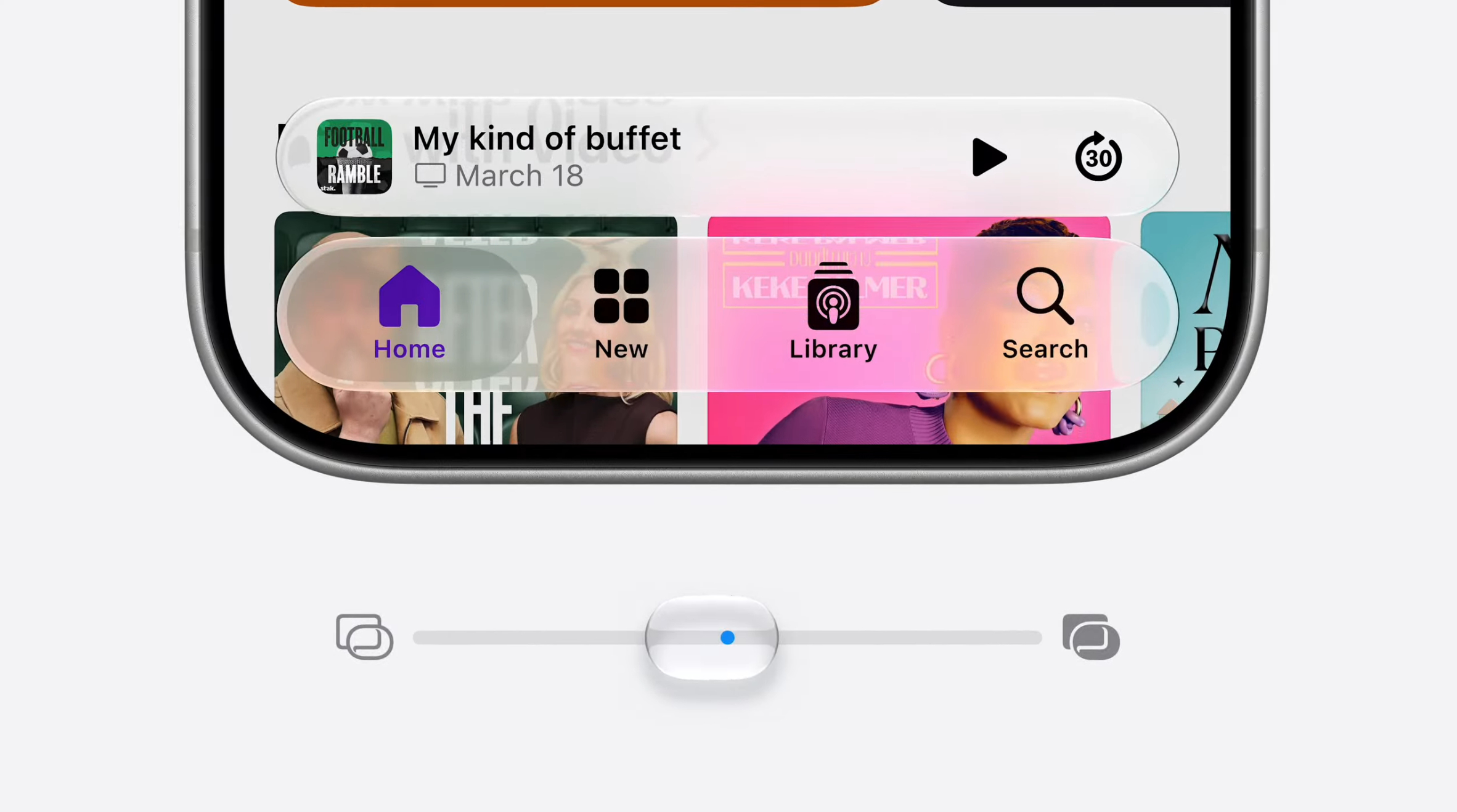

Apple's new Siri lives everywhere: What comes next?

Apple is building a new layer of AI into all of its devices, with a focus on Siri. The integration is expected to improve the user experience and could bring significant changes to the tech industry. The update is part of Apple's effort to expand its AI capabilities and make its devices more intuitive.
